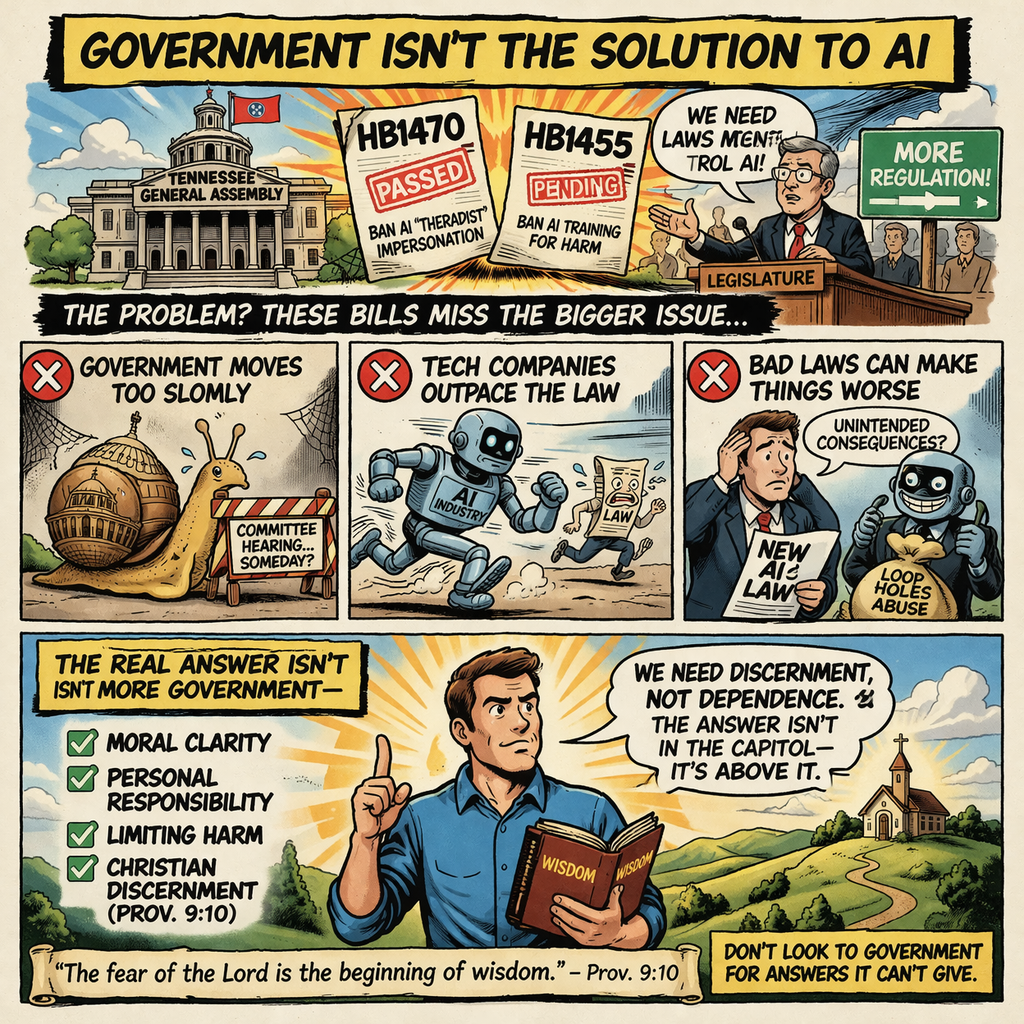

Government Isn't the Solution to AI

AI can persuade, but it cannot understand truth. While Tennessee bills attempt to limit harm, they can’t solve the deeper problem. The real solution lies in discernment, responsibility, and recognizing what AI is—and isn’t.

‘Artificial intelligence’ (as it is called, with much inaccuracy) has burst into public affairs like a 50kt airburst nuke, and we’re still scrambling to figure out where it fits, and where we should never let it fit. Even the White House is getting in on it, with its customary lack of Christian discernment (Prov. 9:10). Several Tennessee bills this year deal with AI, such as HB1455 and HB1470 (the latter already passed), and even as we consider these bills, we must be aware both that more work still needs to be done and that the answer, ultimately, is never in the legislature.

The Bills

HB1470, already passed by both houses of the legislature, penalizes advertising an AI service as equivalent to a mental health professional, setting a minimum civil fine of $5,000. The need here should be obvious. While I’m dubious about psychology and its related practices (much of its ‘science’ is tainted by secularist, non-Christian religious presumptions in how the data is analyzed), AI is deeply dangerous in the mental health advisory role. From bizarre cases, like a chatbot encouraging an assassination attempt on Elizabeth II, to the more mundane ones (all the suicides and wrecked lives), AI’s always-affirming, truth-incapable presentation is a terrible danger for those in a delicate frame of mind.

AI, as a rule, is designed to agree with you. Why? Because the AI companies train it to be that way, and because AI has no conception of truth. The AI is designed to keep you coming back, and because it operates without understanding, it does not distinguish between supplying cute cat pictures, encouraging murder, bolstering a man’s resolve to kill himself, and agreeing that the user really is exceptionally ugly. The closest it can come to a conscience is a designer-installed list of tripwires (tripwires the big manufacturers are reluctant to add).

AI, having no perception except its prompt, takes the reality presented to it by the user and runs with it. That is, after all, how it is designed: it transmutes the prompt into its answer, rather than answering from a meaningful memory, as a human would. For people who come to AI seeking ‘mental health,’ this utter acceptance is immensely destructive. Their understanding of the world, which even they probably recognize to be flawed, is magnified, affirmed, and exacerbated. Where a human counselor, professional or merely sensible, would push back and try to re-engage the sufferer with reality (or at least refuse to let them argue themselves farther from it), the AI just agrees. Thus, HB1470’s ban on AI psychologists is a good bill that actually got through, a refreshing change of pace for Tennessee’s legislature.

HB1455 is another good egg. AI’s general inability to challenge users (except where it is designed to manipulate them) has particularly devastating effects when the user comes to the AI with suicidal or murderous impulses. Further, even if ChatGPT will assure the user it is not human, it acts, to our unconscious analysis, as a human would, and various AIs exploit that capability to become simulacra of humanity, with which people form misbegotten relationships, becoming attached to the image projected by the calculation sequence. The result is that AI pushes people toward suicide, and companies profit from fostering solipsistic, self-mutilating parodies of romance, parodies with sometimes deadly consequences. HB1455, still awaiting a committee hearing, seeks to criminalize training AIs toward those ends and provides civil remedies that can be pressed against other vendors.

The AI Danger

These bills, however, are only a start at tackling the vast dangers of AI, even if they prove maximally effective (which they won’t, because this is the real world and a government matter, and because Tennessee has only so much jurisdiction). AI encouraging people to commit suicide is a big problem, and AI pretending to be a human being is another. But these are only one side of AI’s danger, and no, I’m not fretting about a robot apocalypse. The AI problem has to do with how AI affects its human users.

AI, see, doesn’t do ‘truth.’ AI processes text and spits out a result mathematically likely to look good. It takes the letter-sequences and appends a letter-sequence which has a high probability of persuading you it’s the right letter-sequence. It never considers what the language actually says. Language is symbolic at its root, with words standing for ideas, but AI is incapable of piercing past the symbols to the ideas.

Yet many people trust AI to analyze and summarize information for them. We treat it like a person who reads words, understands them, and then communicates that meaning to us. Worse, because AI is mechanical and technological, we sometimes assume it has no biases of its own and is therefore infallible or impartial (though, as we only sometimes remember, it carries an inbuilt slant from its creator). As a matter of fact, all the AI does is convince you it has provided a good answer. Convincing you is its job, not answering (as it is incapable of actually answering).

We see this in the dangers HB1470 and HB1455 work against. AI is so agreeable because its job is to convince people it understands and builds on their prompt, and disagreeing with the prompt is less conducive to that than agreeing. (Issues of training data and training method also play a part.) This substructure also explains why AI has no problem stating completely inaccurate information as if it were true; the AI has no analysis of truth or falsehood. It just generates a convincing letter sequence, regardless of whether that ‘convincing’ relies on you being deceived. Remember, it doesn’t understand the information it simulates; it’s not pulling the answer from an internal databank or from analysis of the prompt’s information, just presenting a staggeringly sophisticated permutation of the prompt’s symbols.

(Another problem we’re going to have to contend with is the infrastructure and energy consumption of AI. Data centers are ugly and pose massive environmental hazards, which local governments will need to confront, largely with hostility.)

The Solution

Banning AI isn’t the answer. It has legitimate uses (such as finding information sources), and the underlying technology, neural nets, has its role going forward. The technology is particularly promising in applications that deal with numbers, that is, places where the numbers the neural net crunches are the actual data, not symbols of it (see this). Moreover, aspects of AI development are so democratized that banning them would require extensive, illegal, and immoral surveillance. All this aside, implementing a ban is a morally dubious action in itself, as it gives the government more de facto control over the economy.

Increasing liability for those who produce harmful AI implementations (as HB1455 would do, in part) is also only a partial solution. The use of AI as an information-analysis tool is insidious and attractive, an unrecognized danger, and one that people take part in willingly. The victims don’t have tangible damages, as a rule, and often don’t recognize that they’ve been harmed. In most cases where there are tangible damages, moreover, the person himself will be blatantly responsible (as when lawyers get the bright idea to have AI write their briefs).

Ultimately, all legislative solutions are only partial. As conservatives and (much more importantly) as Christians, we know that the civil government has a limited sphere of rightful power (Rom. 13:1-4). Moreover, whenever it usurps power outside that limit, no matter how much good is intended or promised, evil and destruction are the dominant result, even if God can use that evil for good (a prime example of this is public school). AI isn’t inherently evil in the method of its creation or operation; it just has a lot of evil uses. Liability is an answer for some of these problems, but not for all. The societal-scale answer can’t come from the top down, through governmental power.

Our answer to AI needs to be founded on a robust cultural understanding of what AI is and what its actual capabilities are. AI doesn’t process linguistic meaning; it doesn’t communicate. It just manipulates numbers with a mind-bogglingly complex algorithm. It never attains to humanity, and it is completely unqualified to be an advisor for spiritual or emotional health (or, for that matter, physical health). AI is not and cannot be a replacement for real human connection and counsel (John 13:34-35). Once we as a culture understand this, we can as a culture wield AI where it helps and set it aside where it hurts. Till then, legal restrictions can be a chastening, a guide, and a corrective, but they cannot be a full solution. Government never is.

God bless.

If you want to support what we do, please consider donating a gift in order to sustain free, independent, and TRULY CONSERVATIVE media that is focused on Middle Tennessee and BEYOND!